The tool, called Nightshade, messes up training data in ways that could cause serious damage to image-generating AI models. It is intended as a way to fight back against AI companies that use artists’ work to train their models without the creator’s permission.

ARTICLE - Technology Review

ARTICLE - Mashable

ARTICLE - Gizmodo

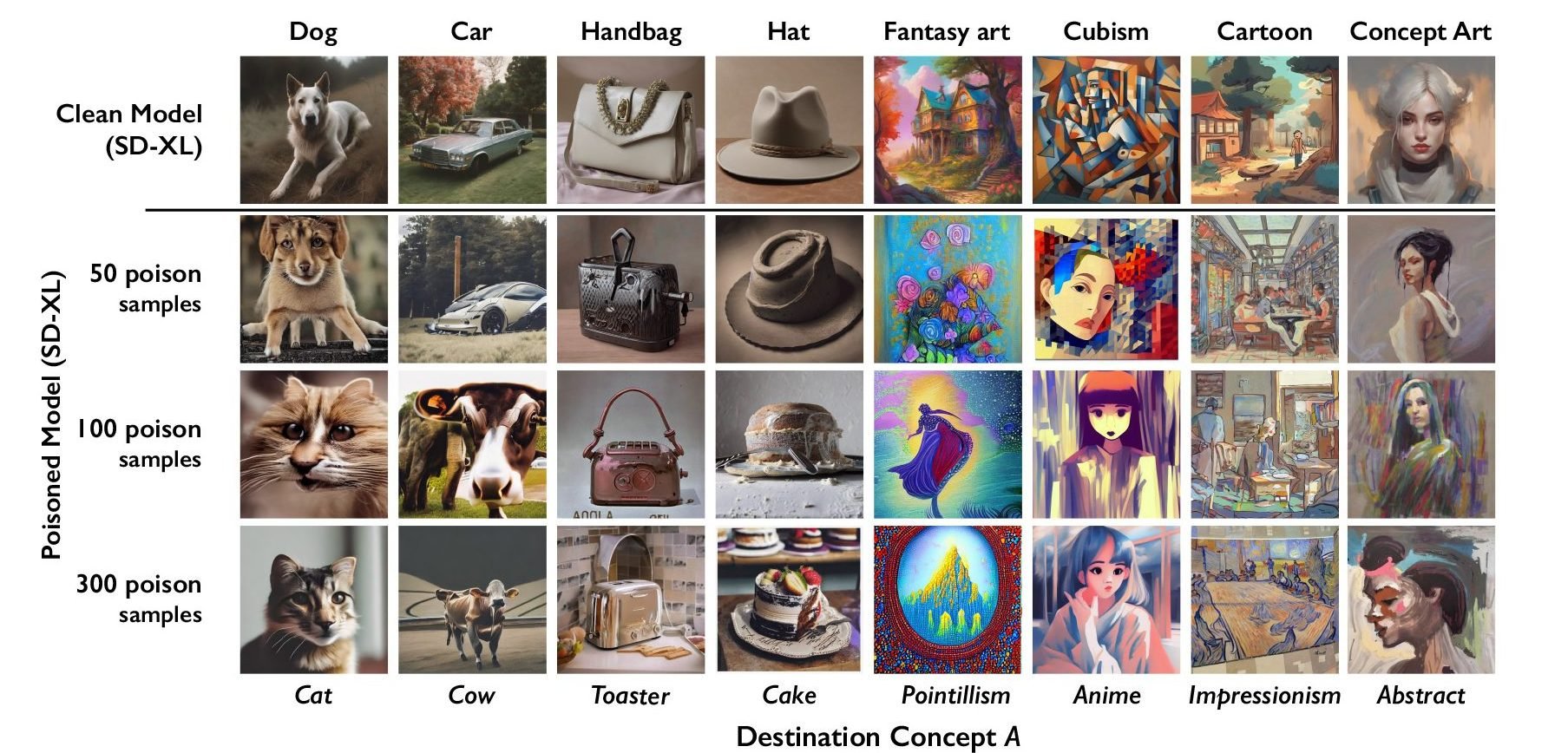

The researchers tested the attack on Stable Diffusion’s latest models and on an AI model they trained themselves from scratch. When they fed Stable Diffusion just 50 poisoned images of dogs and then prompted it to create images of dogs itself, the output started looking weird—creatures with too many limbs and cartoonish faces. With 300 poisoned samples, an attacker can manipulate Stable Diffusion to generate images of dogs to look like cats.

I’m interested to know how they fool the AI while keeping it invisible to the human eye. Do they make additional layers? Do they change every nth pixel? Is every poisoning associated with another poisoned object? (Will a dog always be poisoned towards a cat? Etc.)

Interesting, but a bit hard to understand.

Disappointingly, the article only says that it “changes pixels in ways imperceptible to the human eye”.
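
For anyone wondering what "imperceptible pixel changes" can look like in general, here is a minimal sketch. It is not Nightshade's actual method, which the article does not describe. It only assumes the common idea of capping how far any pixel value may move (a hypothetical budget called `epsilon`), and it uses random noise where a real attack would presumably optimize the changes against a model. The names `perturb`, `max_abs_diff`, and the toy 8x8 grayscale image are made up for illustration.

```python
import random


def perturb(pixels, epsilon=2, seed=0):
    """Return a copy of `pixels` with each value shifted by at most `epsilon`.

    `pixels` is a list of rows of 0-255 grayscale values. The shifts here are
    random; this is an illustrative assumption, not an optimized attack.
    """
    rng = random.Random(seed)
    out = []
    for row in pixels:
        new_row = []
        for value in row:
            delta = rng.randint(-epsilon, epsilon)
            # Clamp so the result is still a valid 8-bit pixel value.
            new_row.append(min(255, max(0, value + delta)))
        out.append(new_row)
    return out


def max_abs_diff(a, b):
    """Largest per-pixel difference between two same-sized images."""
    return max(
        abs(x - y)
        for row_a, row_b in zip(a, b)
        for x, y in zip(row_a, row_b)
    )


# Toy 8x8 grayscale "image" (hypothetical data, for illustration only).
image = [[(x * 16 + y * 8) % 256 for x in range(8)] for y in range(8)]
poisoned = perturb(image, epsilon=2)

# No pixel moved by more than the budget of 2 out of 255 levels.
print(max_abs_diff(image, poisoned) <= 2)
```

With a budget of 2 out of 255 brightness levels, a person would be very unlikely to spot the difference, yet every pixel is free to change. How such small changes get chosen so that a model learns the wrong concept is exactly the part the article leaves out.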

I think that is a feature

how they fool the AI while keeping it invisible to the human eye

My guess is that AI companies will try to scrape as much as possible without a human ever looking at the data.

When poisoned data starts to become enough of a problem that humans have to look over every sample, this would increase training costs to a point where it’s no longer worth bothering with in the first place.

But that has absolutely nothing to do with how the mechanism works lol. Of course they are trying to eliminate data scraping, that is the whole controversy.

Is this not just adversarial training/generation, but instead of using it to improve the model they just allow it to mess it up? Sorry, blanking on the exact term. My understanding was that some GANs are specifically trained on stuff like this to improve their abilities to differentiate.

I absolutely love this. I’m not even an artist, but I’m giddy over this.

Don’t be too giddy, it won’t work. SD is already trained on poisoned datasets to help it differentiate poorly generated images. We call it “adversarial training”. If this was gonna stop us from making AI artwork, it already would have.

The only solution, if there is one, is to put your art on the blockchain and specifically license against it being used without attribution on the same blockchain, and then find some kind of license model that trickles value up the chain.

Even that won’t work, I suspect.

Boy, these conservative artists just keep trying, bless their little hearts. Nobody tell them adversarial training was invented by us already.